Rapid improvements in robotic technologies are presenting both civilian policy makers and military leaders with uncomfortable ethical choices. The pace of change is even quicker than many imagine: California recently issued its 30th corporate permit to test autonomous vehicles on public roads.[1] Emerging artificial intelligence (AI) technologies offer impressive gains in military effectiveness, yet how do we balance their use with accountability for inevitable errors? Viewed against the history of major technological and conceptual advances, many of these tensions and choices are neither new, unique, nor conceptually unapproachable. The choices fall broadly into three categories: How much autonomy do we give autonomous weapons to maximize their military effectiveness? Who makes that decision? And perhaps most critically, who is held accountable when something inevitably goes wrong? Fortunately, current military thought, doctrine, and regulations already provide an effective and adaptable conceptual framework for these challenges.

Background

In its directive on Autonomy in Weapon Systems, the Department of Defense (DoD) describes an autonomous weapon system as one that “once activated, can select and engage targets without further intervention by a human operator.”[2] The DoD currently allows fully autonomous systems to operate in a defensive mode against “time-critical or saturation attacks” but places additional limitations on them, such as prohibitions on targeting humans and on selecting new targets in the event of communications outages.[3] Technology has advanced rapidly since the directive was first published in 2012, yet even after the May 2017 update, the policy appears to accept autonomy in defensive and non-lethal systems while placing additional restrictions on offensive systems.[4] For purposes of this discussion, a warbot goes a step further and is defined as an offensive robotic system that can “detect, identify, and apply lethal force to enemy combatants within prescribed parameters and without immediate human intervention.”[5] This expanded use of technology and autonomy comes with significant risk, but the cardinal issue remains: can we operationalize the tremendous benefits offered by warbots while continuing to constrain our war making within current ethical standards and accountability methods? This may not be as difficult an issue as we might initially suspect.

Increased Autonomy: How Much?

The current debate over keeping a human in the loop or on the loop is useful but misses the larger issue of military effectiveness.[6] The “Law of Armed Conflict does not preclude employing learning machines on the battlefield in accordance with jus in bello,” and there are many clear military advantages in using them.[7] Yet to be as effective as possible, both humans and warbots require a degree of autonomy and must operate under similar constraints.

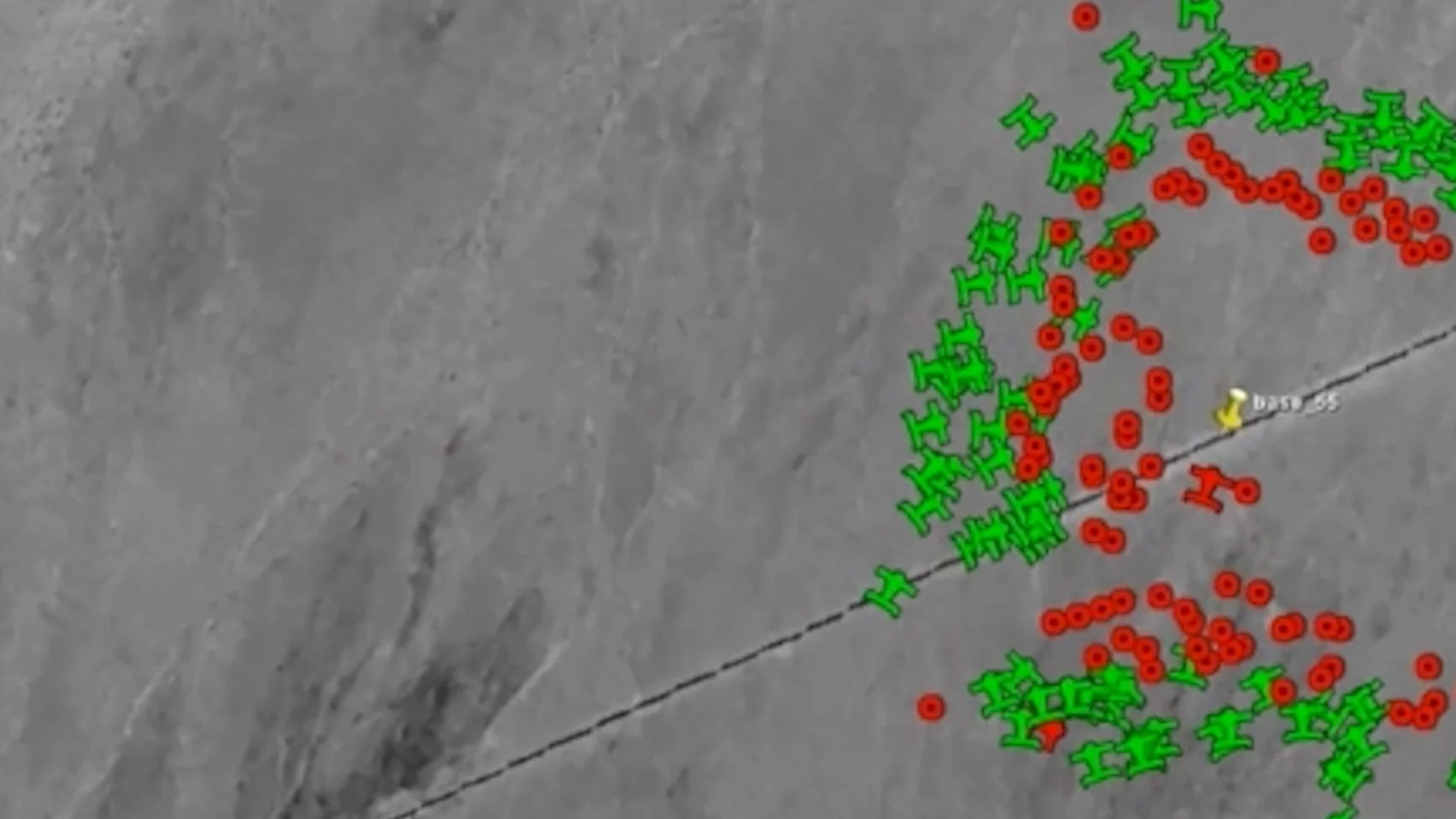

The Department of Defense and Naval Air Systems Command successfully demonstrated a swarm of 103 Perdix micro-drones at China Lake, California. The swarm demonstrated significant components of autonomy: collective decision-making, adaptive formation flying, and self-healing. (Department of Defense)

Humans in combat do not have unrestrained autonomy, nor are they excused from accountability if their actions fall outside prescribed moral, legal, and ethical norms. Human combatants, and their use of the systems they man, direct, or operate remotely, are all constrained by approved rules of engagement, the laws of war, military regulations, command guidance, moral considerations, and the legal constraints of necessity and proportionality. Yet to be effective and accomplish their military mission, human combatants are granted a necessary, but not unlimited, degree of autonomy. This degree of autonomy varies, increasing or decreasing with numerous factors, including the specifics of the assigned mission, the capabilities of the subordinate, assessed risks, the area of operations, and the degree to which non-combatants are present. An appropriate degree of autonomy in a dynamic and rapidly changing environment allows subordinates to “exercise disciplined initiative within the commander’s intent.”[8] Operating in these types of environments is a core competency of US military leaders and gives them and their units a comparative advantage over more rigidly structured and less autonomous military forces.

To maximize the effectiveness of warbots, they also require a degree of autonomy. The importance of this autonomy increases in proportion to the level of technology available relative to enemy systems. A warbotic combat vehicle denied a competitive degree of autonomy will be at a distinct disadvantage when fighting faster-thinking machines that do not require human approval before executing firing solutions. However, the environments in which warbots will be used, their relative utility, and therefore the required degree of autonomy will vary dramatically with the situation. An offensive across the deserts and mountains of Iran would look very different from a prolonged battle in a mega-city like Manila, and the differences would lie not only in the types of forces encountered but also in terrain, electromagnetic density, the number and type of civilians present, and proximity to command nodes, to name a few. Consequently, warbots, like humans, will operate under variable autonomy settings. These could take the form of adjustable rules of engagement that require higher degrees of certainty about a target before firing without human intervention, or of instructions similar to the air defense orders “weapons hold,” which allows firing only when fired upon; “weapons tight,” which allows firing only at targets positively identified as enemy; and “weapons free,” which allows firing at anything not identified as friendly. Variable autonomy enables warbots to maximize their capabilities while allowing their responses to be tailored as required to keep the probability of collateral damage low.

Who Decides?

The question of who makes these autonomy decisions is an interesting one because of the nature of military decision making itself. At what level does a decision need to be approved by a higher command? Too much centralization can produce organizational paralysis in crisis situations and limit the ability of subordinates to exploit fleeting opportunities. With too little, a force becomes a widely divergent organization that lacks a central nervous system and can behave like a disorganized mob. The US military takes a balanced approach to this challenge with centralized planning and decentralized execution, all within the commander’s intent. Leaders up and down the chain of command engage in dialogue to ensure decisions are made at the appropriate level. Just as it would be unwise to allow a lieutenant to use nuclear weapons, it would be ineffective to require the president to approve every pull of a rifleman’s trigger. The military has rules of engagement and mechanisms in place to ensure that decisions, including degrees of autonomy, are made and understood at different levels. This points back to the central role of the commander as the final and accountable decision maker. Commanders must make the unenviable decisions regarding dangerous courses of action, collateral damage, degrees of acceptable risk, amount of autonomy, and the myriad other life-and-death choices the mission demands. This has held true for humans and technology so far, and it remains true for warbots. Commanders will continue to decide which weapon systems they employ, how much autonomy to give their human and robotic subordinates, and what restrictions to place on their forces to ensure larger strategic objectives are not compromised by tactical missions.

Accountability

The robotic nightmare scenario might look like this: a future commander is informed that during a combat operation, multiple safeguards failed and, in the confusion of battle, a recently fielded, AI-enabled attack helicopter mistakenly targeted a location where numerous civilians were present. Before the headquarters learned of the mistake, dozens had been killed and wounded. The internet erupted as prominent news outlets quickly reported on the carnage. The commander thought, “This is why we should never have given machines autonomy; I knew we should have kept a man in the loop...”

Yet replace “AI-enabled attack helicopter” with “AC-130U gunship” and we have a brief description of the October 3, 2015 airstrike on the Médecins Sans Frontières hospital in Kunduz.

An employee of Doctors Without Borders inside what remained of the organization’s hospital in October 2015, after an airstrike that left 42 people dead. (Najim Rahim/AP)

The problem of accountability is perhaps not as difficult as it might initially seem. In the course of military operations, things inevitably go wrong, often with tragic consequences. Responsibility for failure is not delegable; it always resides with the commander who made a particular decision. Army Regulation 600-20 frames it this way: “Commanders are responsible for everything their command does or fails to do… Commanders who assign responsibility and authority to their subordinates still retain the overall responsibility for the actions of their commands.”[9]

Fortunately, the military already has established mechanisms to assess whether a tragic outcome was the result of a leader’s poor decision, a larger institutional failure (training, standards, regulations, etc.), a toxic command climate, equipment failure, acts of God, or a host of other factors. Formats vary, but the most common formal investigations are the AR 15-6 investigation in the Army, the Commander’s Investigation in the Air Force, and the JAGMAN investigation in the Navy. These formal investigations can serve as the basis for both criminal and administrative punitive actions. They include legal counsel and review, have one or more investigators assigned for as long as necessary to bring the matter to conclusion, and can draw on, or even be assigned, technical experts as needed. They are routinely used after accidental training deaths, groundings of ships, friendly fire incidents, collateral civilian deaths, and a host of other incidents. These investigations provide tools to ensure professional accountability and can produce a range of outcomes, including the removal of leaders, criminal charges, institutional or equipment changes, and in some cases exoneration, all based on the facts of the situation.

These accountability tools apply to situations involving humans and current equipment and have been extended to incorporate new technologies such as precision-guided munitions. Extending them to warbots is a logical and prudent step in incorporating new technologies; it is not a leap into the unknown.

Conclusion

Although the rapid advance of artificial intelligence and warbots could disrupt U.S. military force structures and employment methods, these technologies offer great promise and are worth the risk. This is especially true because the conceptual mechanisms for providing variable autonomy and direct accountability are already in place. Commanders will retain their central role in determining the level of autonomy given to subordinates, whether human or warbot, and will continue to be held accountable when things inevitably go wrong with either.

Brian M. Michelson recently retired from the US Army after a 30-year career. His first book, "Warbot 1.0: AI Goes to War," will be released on 1 June 2020 and is currently available for pre-order on Amazon.

Header Image: Morals and the Machine (Derek Bacon/The Economist)

Notes:

[1] Andy Kessler, “Bad Intelligence Behind the Wheel,” The Wall Street Journal, April 24, 2017, A15.

[2] Department of Defense, Department of Defense Directive 3000.09, Change 1, Autonomy in Weapon Systems (Washington, D.C.: Department of Defense, May 8, 2017), accessed May 12, http://www.dtic.mil/whs/directives/corres/pdf/300009p.pdf.

[3] Ibid.

[4] Ibid.

[5] Brian M. Michelson, “Blitzkrieg Redux: The Coming Warbot Revolution,” The Strategy Bridge, February 28, 2017, accessed April 6, 2017, https://thestrategybridge.org/the-bridge/2017/2/28/blitzkrieg-redux-the-coming-warbot-revolution.

[6] Sydney J. Freedberg Jr. and Colin Clark, “Killer Robots? ‘Never,’ Defense Secretary Carter Says,” Breaking Defense, September 15, 2016, accessed May 12, 2017, http://breakingdefense.com/2016/09/killer-robots-never-says-defense-secretary-carter/.

[7] Brent D. Sadler, “Fast Followers, Learning Machines, and the Third Offset Strategy,” Joint Force Quarterly, 4th Quarter 2016, 16.

[8] U.S. Army, Army Doctrine Publication 6-0, Change 2, Mission Command (Washington, D.C.: Department of the Army, March 12, 2014), iv.

[9] U.S. Army, Army Regulation 600-20, Army Command Policy (Washington, D.C.: Headquarters, Department of the Army, November 6, 2014), 6.